Projects

Here you can find all the projects I have been working on.

Here you can find all the projects I have been working on.

How does this website work? How did I deploy it? - AI - contact - js - html and css - deployment - host domain - difficulties etc.. - imporvements - what I learned

In development — a semantic search app built on a large database of TripAdvisor reviews for Paris. Users can paste a review or a short description, and the app returns similar places by comparing the query to precomputed embeddings of reviews and venue content. The goal is meaning-based retrieval (not just keywords) to surface the most relevant matches fast.

This goup project is still in development. It’s an end-to-end emergency-monitoring platform built around SENAPRED, developed at ESILV in cooperation with the Chilean government. Pipeline (tweet extraction): a continuous ingestion workflow that collects relevant tweets in near real time, normalizes/cleans the content, enriches it with useful metadata, and prepares it for downstream processing. Backend: a central data layer backed by Firestore to store events, tweets, and derived signals, plus services that run data processing and analytics (aggregation, trends, filters) to make the information usable. Frontend: a web interface that displays both data analysis dashboards (e.g., trends, breakdowns, summaries) and operational information (alerts/context) so stakeholders can quickly understand what’s happening. Cloud infrastructure: deployed on Google Cloud, leveraging managed services to make the system scalable, reliable, and easier to operate (hosting, scheduling/automation, and secure access patterns). You can check out our advancements on the following repos: https://github.com/leonard-de-vinci/senapred-pipeline https://github.com/leonard-de-vinci/senapred-frontend https://github.com/leonard-de-vinci/senapred-backend

On this website, I built a Retrieval-Augmented Generation (RAG) assistant that answers questions using my own portfolio content (project descriptions, tags, README files, plus a public “About Max” knowledge file). Its purpose is practical: it helps visitors (especially recruiters and engineers) understand each project quickly, and it’s also meant to support people who try to recreate the work—for example if they run into issues installing dependencies, running the code, reproducing results, or following setup instructions. The system is scope-aware: on the home page, it can retrieve from the global knowledge base (e.g., “About Max” + general portfolio content), but on each individual project page, retrieval is hard-filtered to that project only—meaning the chatbot searches only the embeddings/chunks attached to that specific project (project_id filter), so content from other projects cannot “bleed” into the answer. When you ask a question, the system first runs a quality gate to detect short/generic inputs (e.g., “hello”) and routes them to a normal LLM response instead of forcing retrieval. For meaningful queries, it generates an embedding for the question, retrieves the most relevant chunks using a hybrid search (dense semantic similarity + BM25 keyword scoring), and applies a second gate that rejects weak matches to avoid irrelevant context. If retrieval is strong, it injects only the selected snippets into the prompt and the model answers strictly from the provided context—and explicitly says when the context doesn’t contain the answer. The UI also exposes a “top match” score so you can see retrieval confidence in real time. To validate behavior, I created an automated test suite (rag_run_tests) that checks both bypass cases (generic greetings should not trigger RAG) and grounded Q&A (project-specific questions must retrieve only that project’s sources; “About Max” questions must retrieve only the public bio knowledge). The result is a portfolio chatbot that is transparent, testable, and designed to avoid hallucinations by defaulting to general LLM behavior when retrieval evidence is weak—and by enforcing strict per-page retrieval boundaries on project pages. You can download a more specific readme file here.

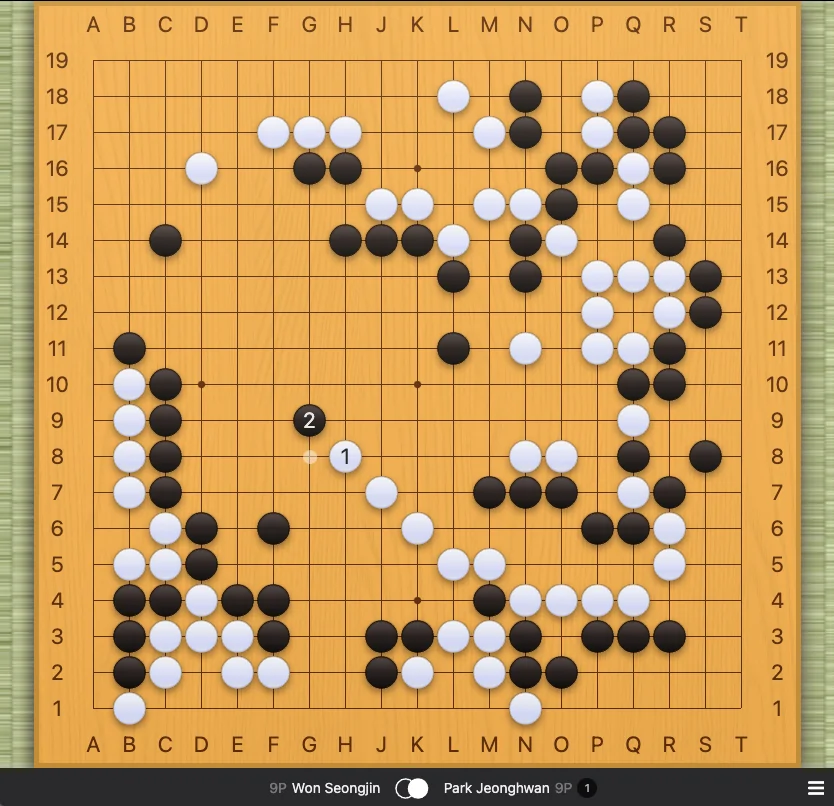

Built a Gomoku (Five-in-a-Row) AI that can play against a human (and also run AI vs AI tests). The project focuses on real decision-making: rule validation, pattern-based threat detection (win/block, open-threes, double attacks), and a stronger search layer using Minimax with alpha–beta pruning + iterative deepening, backed by a heuristic board evaluation to choose the best move efficiently on a 15×15 board. You can download the Readme file or go to the github repository below.

My ANSSI CVE Alert project is basically a small automation that checks ANSSI / CERT-FR for new security advisories and CVEs and pings me immediately when something new drops, so I don’t have to manually refresh the site all the time. Technically, it’s made of a data source (CERT-FR pages/feeds), a collector (scraping or API calls) that pulls the latest advisories + info (CVE ID, title, severity, link, date), a dedup / change check that compares with what I’ve already seen so I don’t get spammed, a notification part (like Telegram/email) that sends a clean alert, and a scheduled run setup (cron, optionally Docker) so it can run 24/7 on a Raspberry Pi or a server, with a small local state file (like session.json) to remember what was already alerted.

A lightweight automation tool that monitors the ESILV presence portal and notifies the user when attendance validation becomes available. This project was built to prevent missed attendance confirmations by automatically detecting when a presence call opens and sending a notification.